Triptych film and voice synthesis generated with machine learning.

This film is an attempt to explore and understand how machine learning tools ‘think’ and ‘learn’, and how that impacts what they make. The story has been written in tandem with generating the visuals, so that the story creates the visuals and the visuals in turn influence the writing of the story.

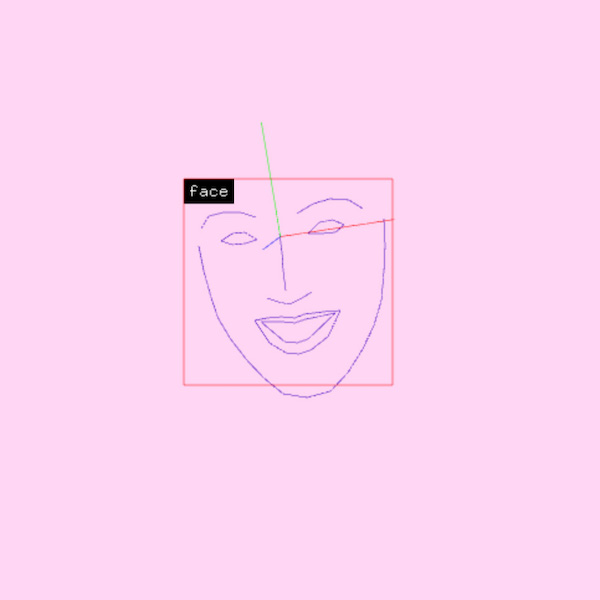

The visuals in this film were created using a computational architecture comprising two parts: an ‘artist’, generating thousands of images based on a series of words/phrases (yellow captions), and a ‘critic’, scoring and making selections from these images. The story has been written in tandem with generating the visuals, which in turn influence the subsequent sequence of the writing. Each panel simultaneously presents an alternative interpretation of the same story.
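The ‘artist’ and ‘critic’ split can be pictured with a toy sketch. This is only an illustration under stated assumptions, not the pipeline used for the film: the candidate ‘images’ are random vectors, the caption embedding is a made-up stand-in for a text encoder, and every function name here is hypothetical. In a VQGAN+CLIP setup the image generator and the text-image scorer would take these roles.

```python
import math
import random


def embed_text(prompt, dim=16):
    """Hypothetical stand-in for a text encoder: one fixed vector per caption."""
    rng = random.Random(prompt)  # seeding with the caption keeps it repeatable
    return [rng.gauss(0, 1) for _ in range(dim)]


def artist(rng, count, dim=16):
    """Toy 'artist': proposes candidate 'images' (here, just random vectors)."""
    return [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(count)]


def critic(candidate, target):
    """Toy 'critic': cosine similarity between a candidate and the caption."""
    dot = sum(a * b for a, b in zip(candidate, target))
    norm = math.sqrt(sum(a * a for a in candidate)) * math.sqrt(
        sum(b * b for b in target)
    )
    return dot / norm if norm else 0.0


def select_best(prompt, count=1000, keep=3, seed=0):
    """Generate many candidates, score each one, and keep the top few."""
    rng = random.Random(seed)
    target = embed_text(prompt)
    scored = [(critic(c, target), c) for c in artist(rng, count)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:keep]


if __name__ == "__main__":
    for score, _ in select_best("a yellow caption"):
        print(round(score, 3))
```

Changing the caption changes the target vector, so the same pool of candidates is ranked differently: the critic's preferences, not the artist's output alone, decide what is kept.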

The artist and critic were trained using millions of images scraped from the internet that were manually annotated and categorised by human workers on Amazon’s Mechanical Turk marketplace, earning an average wage of $2/hour.

These images are our images; interpreted and re-represented beyond our control or intention in an attempt to organise or map a world of objects. This is a political exercise with the consequence of perpetuating and amplifying cultural prejudices and biases that are enmeshed within the dataset. This system favours universalism over plurality and is already shaping a presentation of our world and transforming the future.

Visuals generated using VQGAN+CLIP with an ImageNet model. Audio narration generated using text-to-speech synthesis of the artist's voice.

Marisa Di Monda is an Australian-Italian digital media artist. With a background in web and the study of philosophy, their practice is research-based and uses computation and digital technologies to creatively examine and engage with cultural and political issues, human-computer interaction, and how structures of control within society influence cultural narratives and the way we construct knowledge.